Micronaut vs pure Groovy AWS Lambdas

- “Have you seen this new serverless framework, Micronaut?” my colleague once asked.

- “Yeah, but it is just a framework; it cannot be quicker than a plain lightweight function written in the same Groovy language,” I responded.

- “Yes, but it uses AST transformations, and a lot of the work is moved to compile time,” he said.

This conversation gave me the idea to compare two simple AWS Lambdas: one written as a plain Groovy class and the other as a Groovy function in Micronaut.

I have to say that it is not quite fair to compare a framework-based function with a simple frameworkless one. But even this comparison can lead to some interesting conclusions:

- What impact does Micronaut actually have on cold start time and on warm runs?

- During the experiment we can try out X-Ray, the tool for tracing serverless services in AWS.

- Solid proof for my colleague :)

The full examples of both functions can be found here: MN-Lambda and Groovy-Lambda.

Project setup

Both functions will be non-proxy AWS Lambda functions written in Groovy.

The trigger will be a simple AWS API Gateway endpoint with one query parameter, name. Both functions will simply greet that name.

To load the API with more than a single manual request, I use the quick script from Artillery. (Nice project, by the way, my respect.)

Micronaut setup

Let’s start with the Micronaut function. The framework provides a lot of cool features out of the box that a microservice application may need. One of them is generating a template for a simple Groovy function with a CLI command:

|

|

Of course, you need to set up the Micronaut CLI, but once it is installed, most routine tasks become that easy. I like all the infrastructure/scaffolding things that come with Micronaut. They really do help to create simple projects in seconds. This gives the impression of a lightweight framework that is friendly to beginners. All that said, I should warn you that this is only the first impression. I doubt that you are going to create dozens of new microservice projects every day; most likely you will use this fancy CLI during project setup, and then you’ll just code. And that could really negate the whole advantage.

So, let’s add some code to the Groovy function we just created in mn.hello.world.MnHelloWorldFunction:

|

|

Let’s build it and deploy it to the AWS Lambda service. I will deploy it manually to keep things simple. If you want to see what a more advanced way of deploying Lambdas looks like, check the Stage Vars article.

First, building the jar:

|

|

You can find the built jar here: \build\libs\mn-hello-world-0.1.jar.

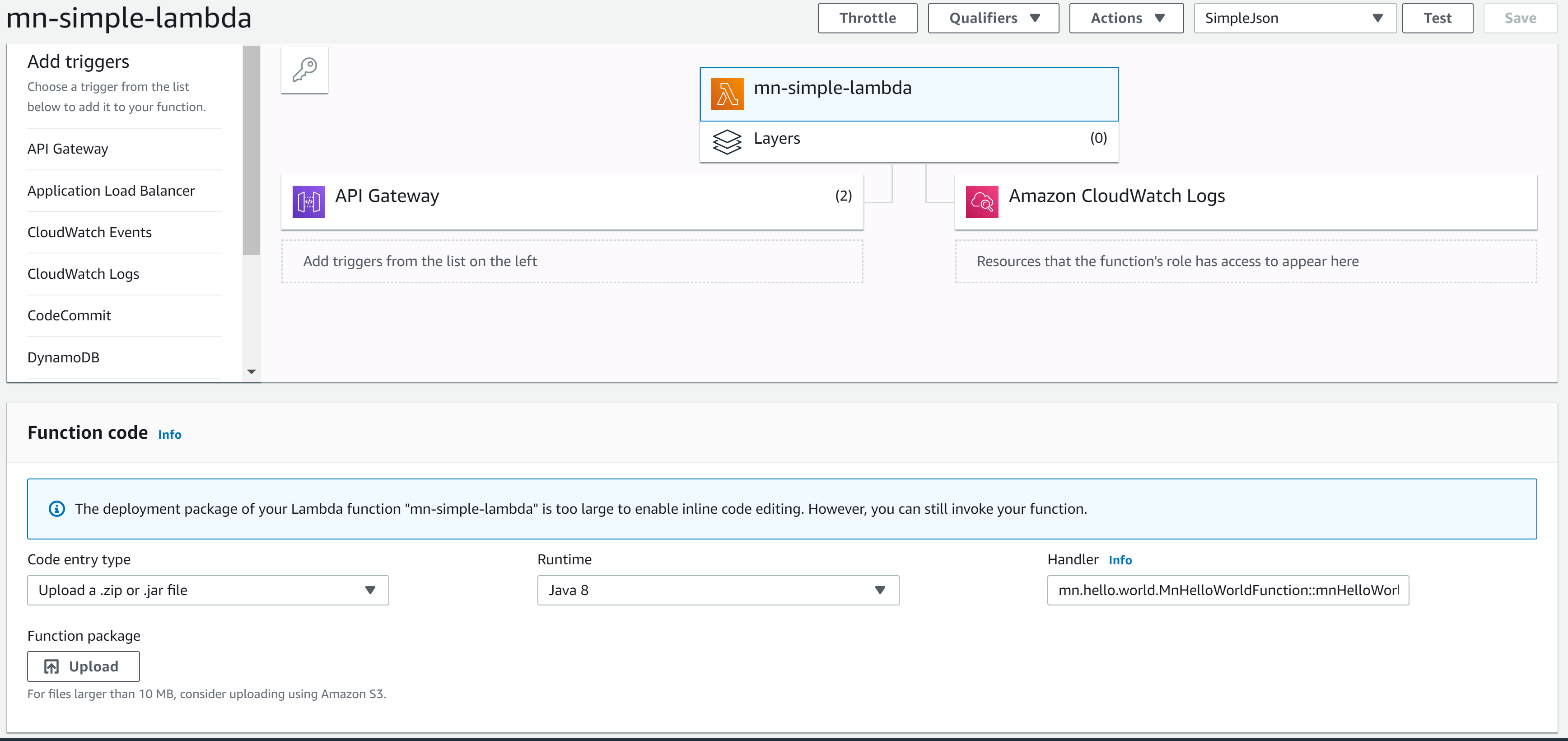

In AWS Lambda, upload this jar as a Java function, enter mn.hello.world.MnHelloWorldFunction::mnHelloWorld as the handler, click Save, and we are done.

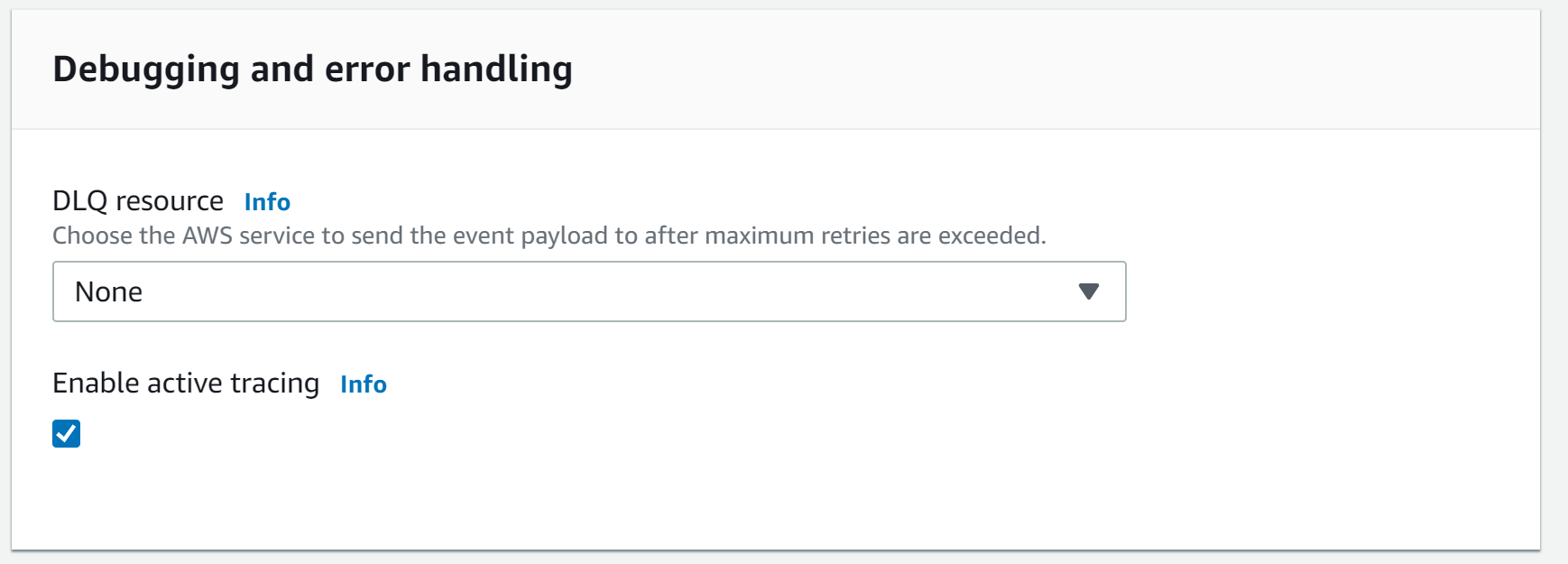

Do not forget to enable active tracing in the debugging and error handling section. More about this later in the article.

Last but not least, let’s create an AWS API Gateway for our Micronaut Lambda. Click API Gateway in the sidebar menu. Keep the default settings for the API and save it. Follow the link to the API Gateway service to configure the newly created API.

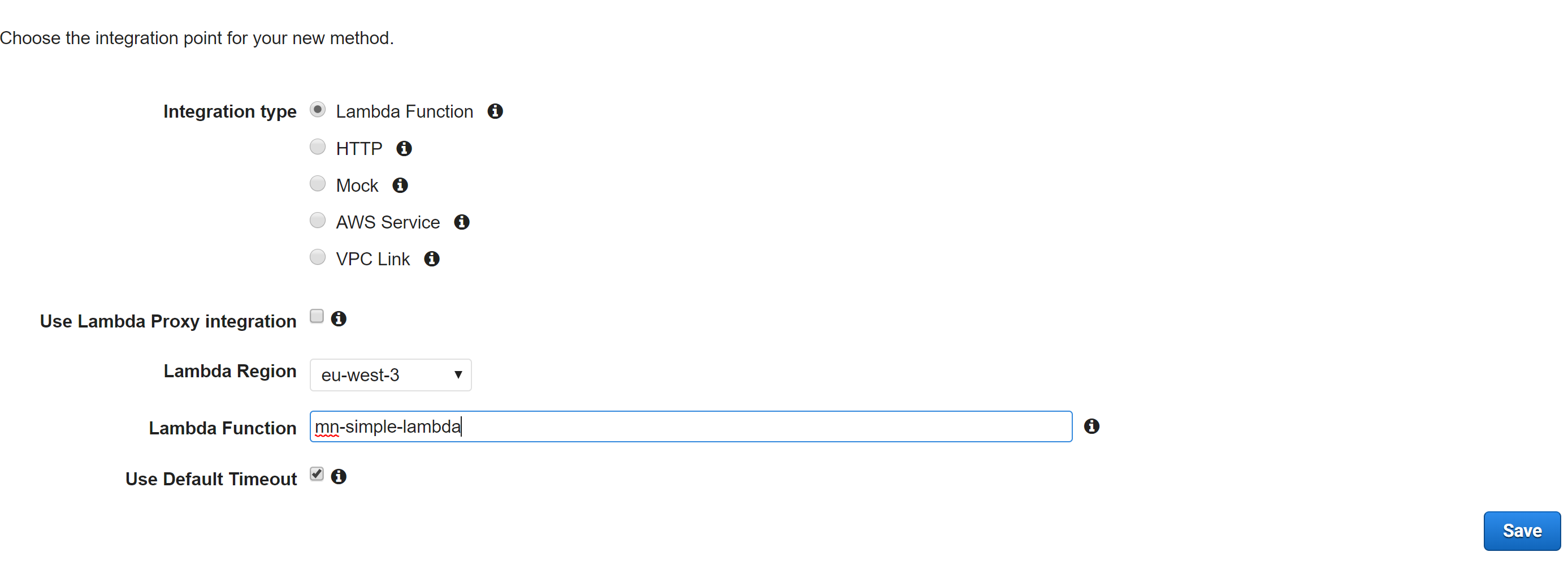

Remove the default ANY method and add your own GET method that points to the Lambda function.

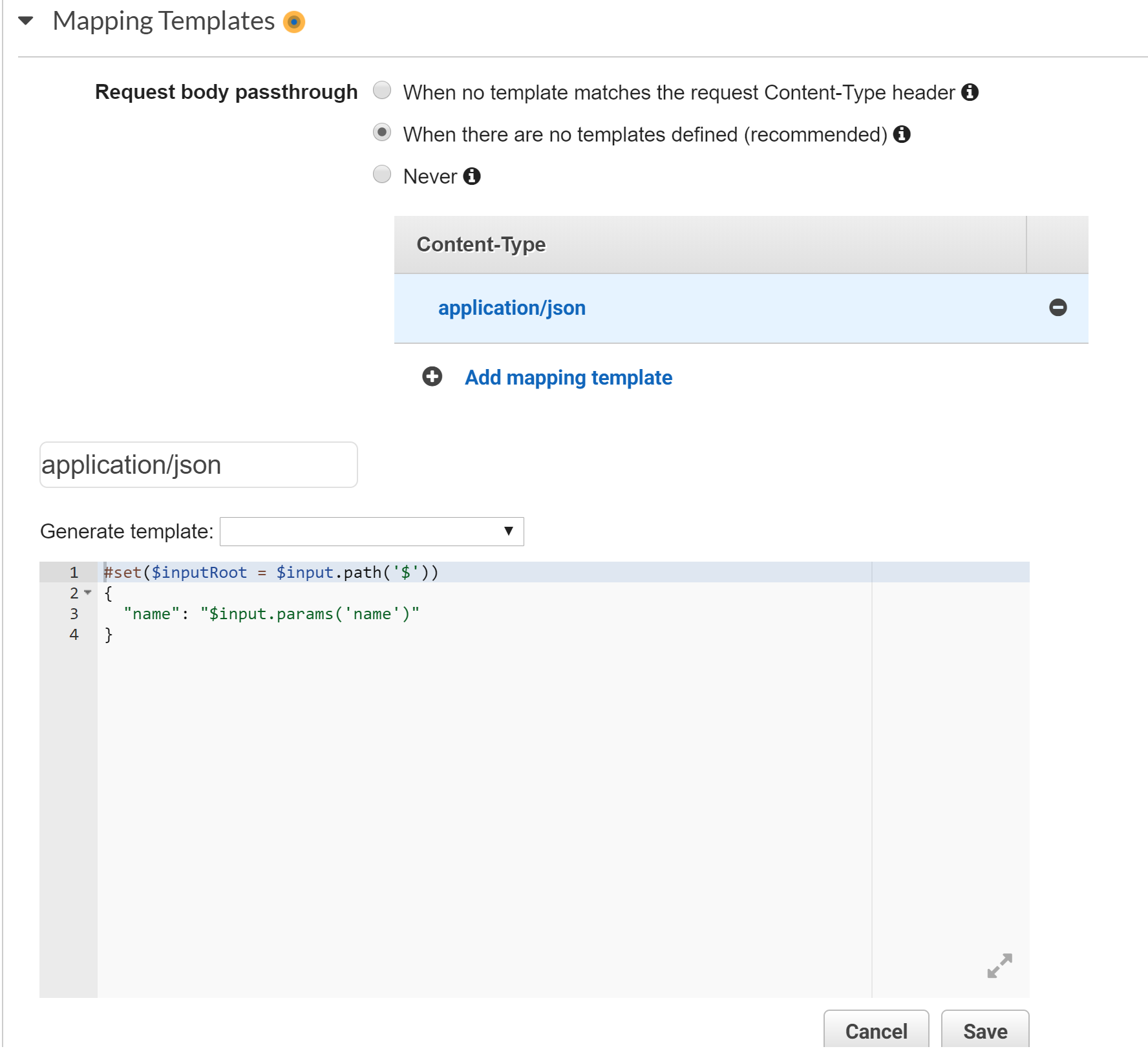

Next, let’s configure the query parameter name:

- go to Method Request and add a query string parameter named ‘name’

- then go to the Integration request and add:

and

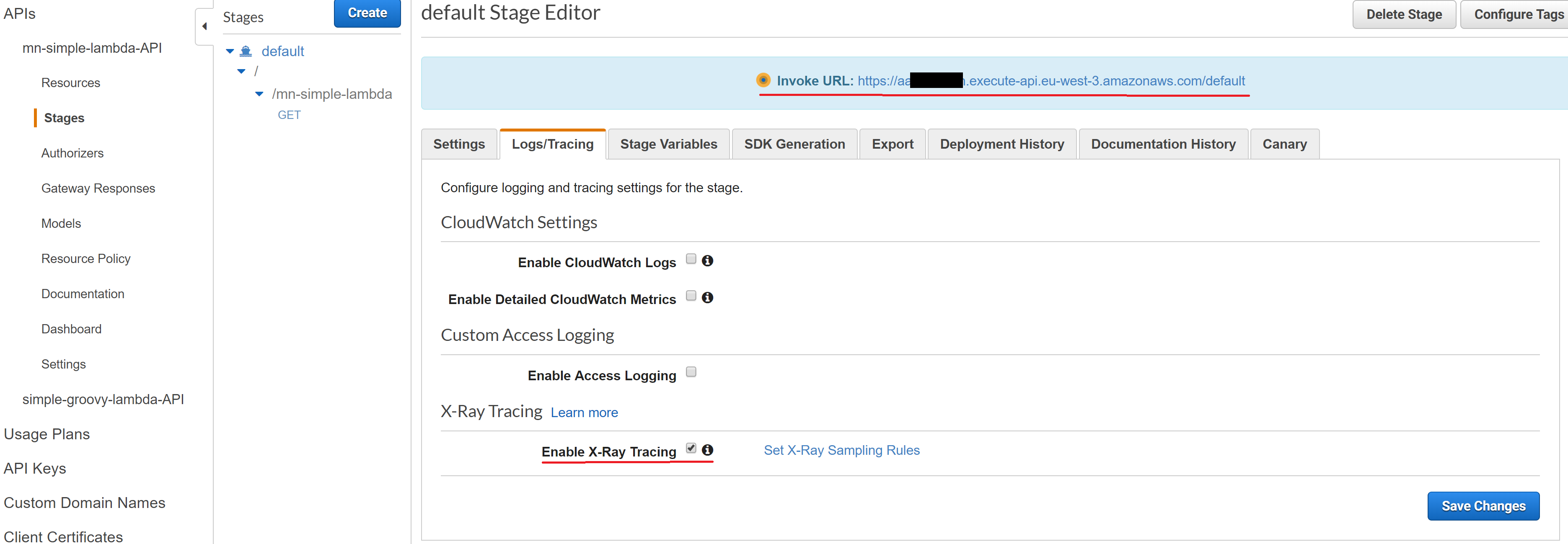

Also, go to the Logs/Tracing section on the stage page and enable X-Ray tracing.

OK, now we are ready to deploy the API to the default stage: click Actions, then Deploy.

In the stage details, you will find the link to the deployed API, which is backed by our Lambda. Go to the link and add a query string, for example:

|

|

the response will be:

|

|

OK, our Micronaut Lambda function setup is done.

Pure groovy lambda setup

Now it’s time for Groovy. Create a Groovy project in IDEA, or just use the repository, and add the following dependency to build.gradle:

|

|

We will also need a task for assembling a fat jar that includes the Groovy and AWS library dependencies:

|

|
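For orientation, a build script of this kind might look roughly like the sketch below. It is not the listing from the repository: the dependency coordinates and versions, the task name fatJar, and the use of a reasonably recent Gradle (5.1 or later, for archiveClassifier) are all assumptions.

```groovy
// build.gradle (sketch): names and versions below are assumptions, not the original listing
plugins {
    id 'groovy'
}

repositories {
    mavenCentral()
}

dependencies {
    // Groovy runtime and the AWS Lambda core interfaces
    implementation 'org.codehaus.groovy:groovy:2.5.7'
    implementation 'com.amazonaws:aws-lambda-java-core:1.2.0'
}

// Bundle the compiled classes together with all runtime dependencies into one jar
task fatJar(type: Jar) {
    archiveClassifier = 'all'
    // Several dependency jars carry the same META-INF entries; keep the first one
    duplicatesStrategy = DuplicatesStrategy.EXCLUDE
    from { configurations.runtimeClasspath.collect { it.isDirectory() ? it : zipTree(it) } }
    with jar
}
```

With a task like this, the jar that gets uploaded to Lambda would be the one with the all classifier under build/libs.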

The class itself looks the same as the Micronaut example:

|

|
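A frameworkless handler of this kind can be very small. The following is a minimal sketch under assumptions rather than the code from the repository: the class name GroovyHello, the method name hello, the greeting text, and the use of a plain Map as input (which is how a mapped JSON body can reach a non-proxy Lambda) are all chosen here for illustration.

```groovy
// Hypothetical frameworkless handler; with no package, Lambda would reference it as GroovyHello::hello
class GroovyHello {
    // With a non-proxy integration the mapped request body can arrive as a plain Map
    String hello(Map<String, Object> input) {
        // Fall back to a default when the 'name' key is missing or empty
        def name = input?.name ?: 'stranger'
        "Hello, ${name}!"
    }
}

// Local smoke check, no AWS required
println new GroovyHello().hello([name: 'Groovy'])
```

Nothing here depends on a framework or a container, which is why there is so little to initialize on a cold start.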

To deploy our pure Groovy Lambda, we will use the same approach as for the Micronaut Lambda.

Assembling the jar:

|

|

Then do all the same things: create a Lambda of the java8 type, upload the jar, enable tracing, and create an AWS API Gateway with the query parameter. The steps in the AWS console are literally the same; only the jar is different.

X-Ray tracing

Let’s put some load on our API services and compare the performance.

|

|

and

|

|

We simulated 10 users making 20 requests each to the specified URLs.

I am using the Amazon X-Ray service, which lets us see the whole chain of invocations; a trace is exactly that chain.

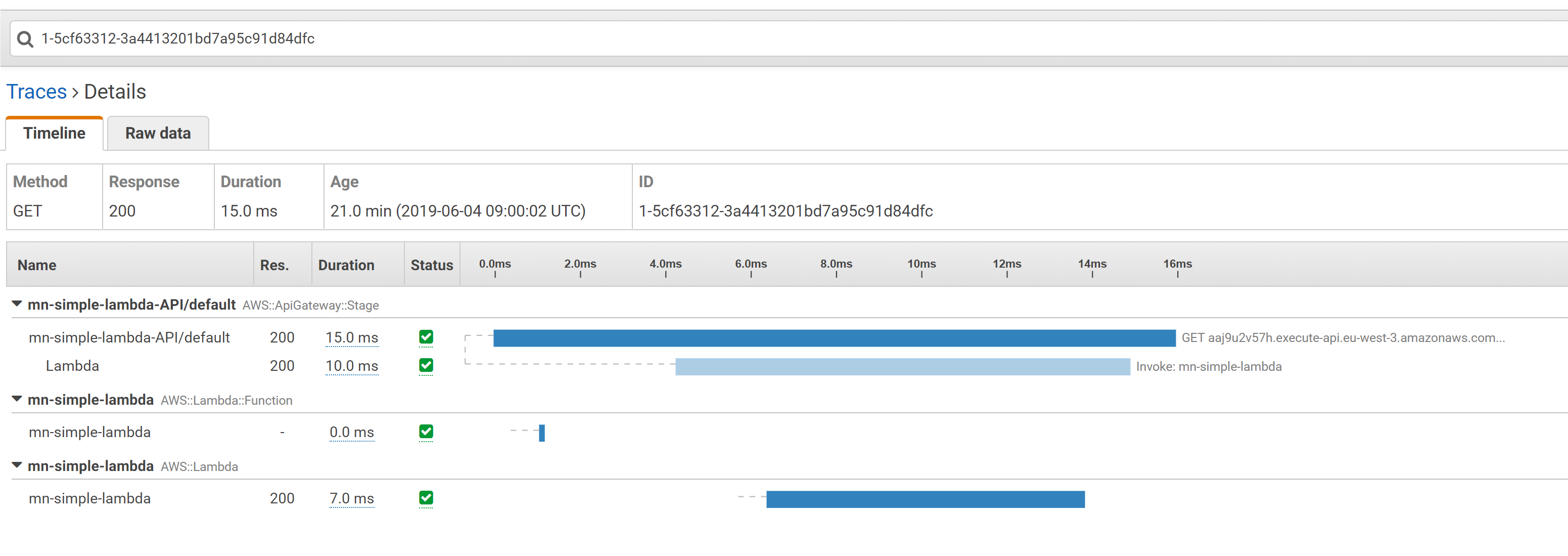

Every Lambda+API pair has its own chain. Now let’s take a look at how this load looks in the Amazon X-Ray service:

The average values include warm and cold executions. Let’s check both traces for each lambda.

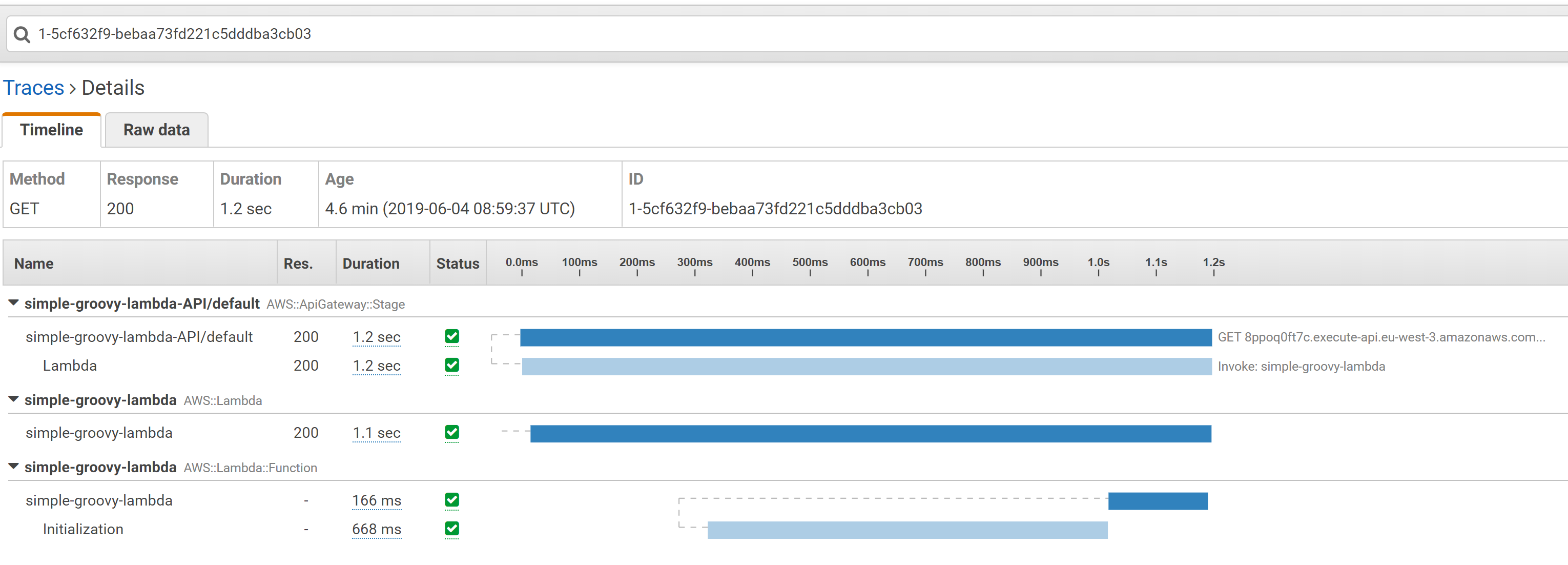

The trace for groovy lambda with initialization:

The trace for groovy lambda without initialization:

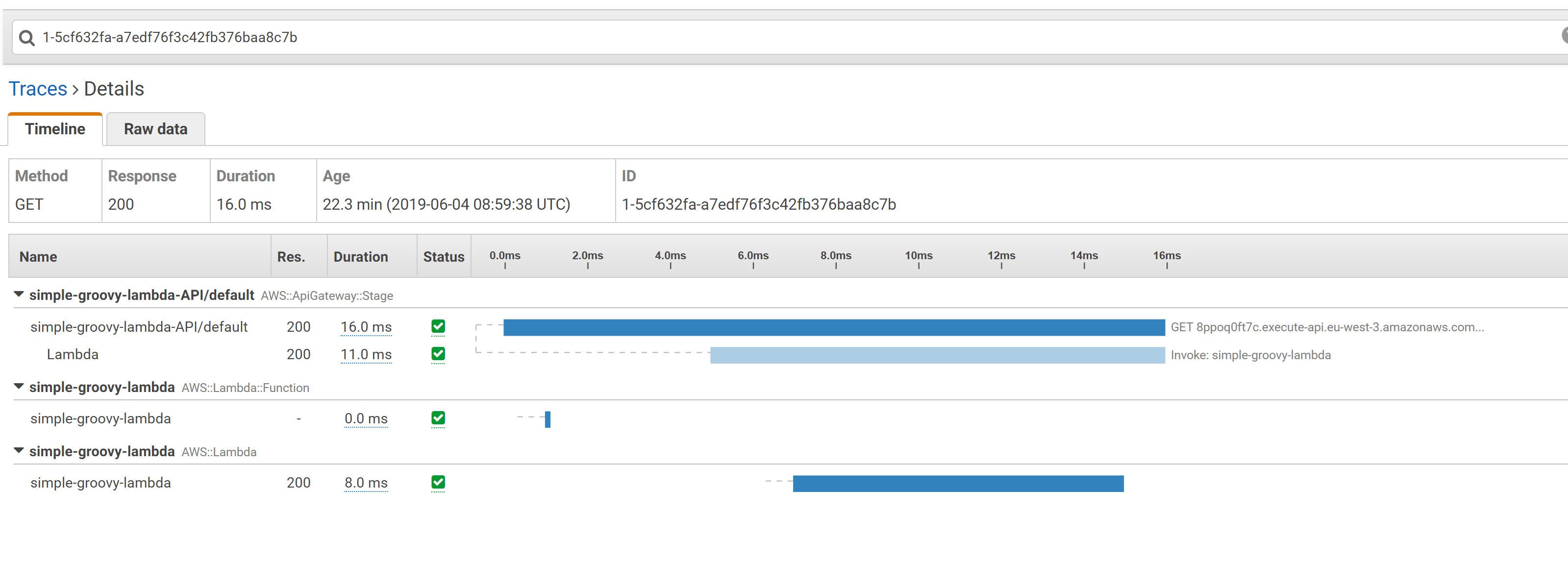

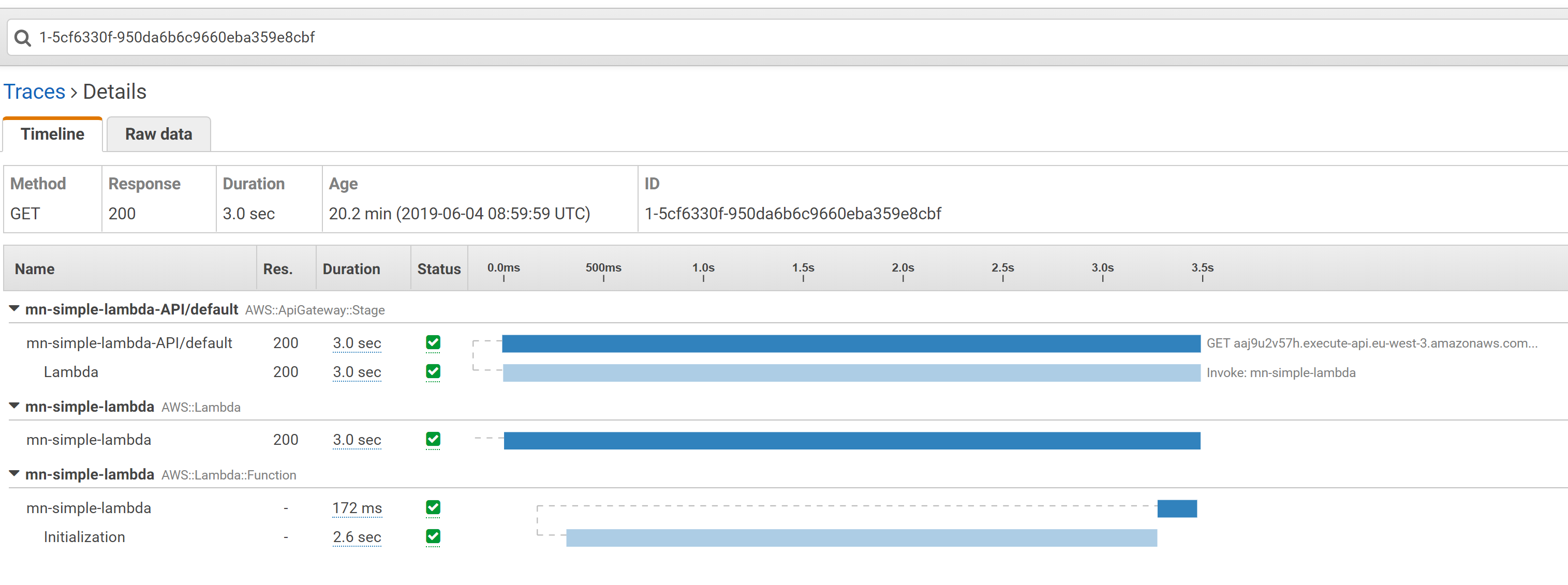

Trace for Micronaut lambda with initialization stage:

Trace for Micronaut lambda without initialization:

The results:

| Lambda | Init time, ms | Pure Lambda exec time, ms |
|---|---|---|
| Groovy | 688 | 8 |
| Micronaut | 2600 | 7 |

We can see that the initialization time of pure Groovy is much lower than that of Micronaut, which is what was expected. But the hot run of the Micronaut function was slightly faster than the Groovy one.
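To put these two columns in proportion, here is a small back-of-the-envelope calculation. It assumes, purely for illustration, that the table values are exact and stable (in reality a 1 ms difference is likely within measurement noise) and asks how many warm invocations a single container would have to serve before Micronaut’s faster hot run pays back its slower cold start.

```groovy
// Measurements from the results table, in milliseconds
def initMs = [groovy: 688, micronaut: 2600]
def execMs = [groovy: 8, micronaut: 7]

// Time one container spends on n requests: one cold start plus n warm runs
def totalMs = { String name, long n -> initMs[name] + n * execMs[name] }

// Extra cold-start cost of Micronaut and its saving per warm call
def extraInit = initMs.micronaut - initMs.groovy
def savedPerCall = execMs.groovy - execMs.micronaut

// Number of warm invocations per container at which both totals are equal
def breakEven = extraInit.intdiv(savedPerCall)

println "Extra init cost: ${extraInit} ms, saving per warm call: ${savedPerCall} ms"
println "Break-even after ${breakEven} warm invocations per container"
```

Under these assumptions a container has to stay warm for well over a thousand requests before the totals even out, and every new cold start resets the count.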

Conclusion

OK, what can we learn from that? First of all, no: Micronaut is, of course, not faster than pure Groovy. I am pretty sure that the 1 ms difference in the hot run could easily be covered by the @CompileStatic annotation in pure Groovy. As for the initialization, the difference is really huge: 688 ms vs 2600 ms.

Of course, there is almost nothing to initialize in Groovy. Even the jar itself is half the size of the one for the Micronaut application: 6 MB vs 12 MB.
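For reference, statically compiling a plain Groovy handler takes a single annotation. The sketch below uses a hypothetical class StaticHello with a Map input and a placeholder greeting; whether it really wins back that 1 ms was not measured in this experiment.

```groovy
import groovy.transform.CompileStatic

// Hypothetical handler: @CompileStatic makes the compiler resolve method calls
// at compile time instead of going through Groovy's dynamic dispatch at runtime
@CompileStatic
class StaticHello {
    String hello(Map<String, Object> input) {
        // Explicit get() keeps the lookup unambiguous for the static type checker
        Object name = input?.get('name')
        return 'Hello, ' + (name ?: 'stranger') + '!'
    }
}

// Local smoke check, no AWS required
println new StaticHello().hello([name: 'Groovy'])
```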

But wait a second: fast initialization is the main point of serverless technology, and Micronaut also presents it as its advantage (in comparison with the old-school Spring framework).

Micronaut provides really cool features out of the box, like service discovery, rate limiting, etc., but these features also exist in its slower brother, Spring Cloud. If cold startup is not an advantage anymore, then what is the point of learning a new framework and new annotations? Moreover, Micronaut’s advantage becomes its disadvantage in this case. Its cold startup time is achieved by means of AST transformations, which makes it much harder to dig into the internals of the framework, to debug it, extend it, and eventually contribute to it, especially in comparison with Spring, where everything happens at runtime and you can find the answer to any question just by putting a breakpoint in the IDE.

In my opinion, microservices in AWS can easily be written in a pure frameworkless way. You can write hundreds of these functions, each handling one particular business task. You can write each of them in any language (Java, JavaScript, Groovy, Go), whichever you think fits the function’s purpose best. Amazon’s services provide a lot of tools that help to orchestrate, trace, deploy, and scale functions without the need to put them all under one framework. Maybe I am wrong, who knows…

Author Relaximus

LastMod 2019-06-04